A new paper in Nature Methods from the Shroff Lab describes an improved method for processing 3D fluorescence microscopy images. The method, which incorporates information about the image formation process into a deep learning network, generates clearer images in much less time than conventional methods.

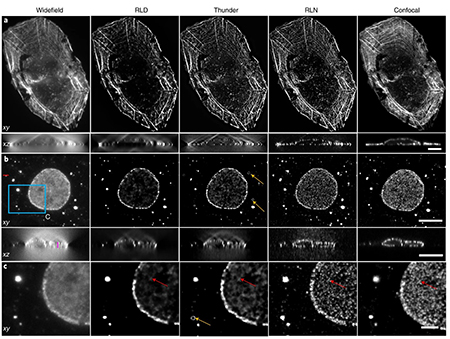

Traditionally, microscopists have deconvolved fluorescence microscopy images with the Richardson-Lucy algorithm, which was borrowed from astronomy. The algorithm relies on information about the physics of the microscope to de-blur the image. While it works well for small samples and can be generalized to any microscope, it doesn’t learn anything about the sample that has been imaged, making it difficult to use on complex 3D and 4D images.
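
For readers unfamiliar with it, the classic Richardson-Lucy update can be sketched in a few lines. The example below is a toy 1D version in plain Python, not the code from the paper or from any microscope pipeline. The function names, the three-point blur kernel standing in for the microscope's point spread function, and the iteration count are all illustrative assumptions.

```python
def convolve_same(signal, kernel):
    """Zero-padded 'same'-size convolution of two 1D lists (odd-length kernel)."""
    half = len(kernel) // 2
    out = []
    for i in range(len(signal)):
        total = 0.0
        for j, k in enumerate(kernel):
            idx = i + half - j
            if 0 <= idx < len(signal):
                total += signal[idx] * k
        out.append(total)
    return out


def richardson_lucy(observed, psf, iterations=50, eps=1e-12):
    """Iteratively de-blur `observed`, given the blur kernel `psf`."""
    psf_flipped = psf[::-1]
    # Start from a flat, positive guess with the same total intensity.
    estimate = [sum(observed) / len(observed)] * len(observed)
    for _ in range(iterations):
        # Simulate what the microscope would record for the current guess.
        blurred = convolve_same(estimate, psf)
        # Compare the simulation with the real measurement, pixel by pixel.
        ratio = [o / (b + eps) for o, b in zip(observed, blurred)]
        # Spread that comparison back through the flipped kernel and
        # use it as a multiplicative correction to the guess.
        correction = convolve_same(ratio, psf_flipped)
        estimate = [e * c for e, c in zip(estimate, correction)]
    return estimate


# Toy data: a single bright point, blurred by an assumed three-point kernel.
truth = [0.0] * 21
truth[10] = 1.0
psf = [0.25, 0.5, 0.25]
observed = convolve_same(truth, psf)
restored = richardson_lucy(observed, psf)
```

In this sketch the only knowledge the algorithm uses is the blur kernel, which mirrors the point made above: it depends on the physics of the imaging system and learns nothing about the sample itself.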

Neural networks, on the other hand, incorporate information about the underlying structure of the image to deconvolve more complex images much faster. But neural networks are only as good as the structures they have been shown, meaning they have a difficult time generalizing what they know about one structure to other structures in an image.

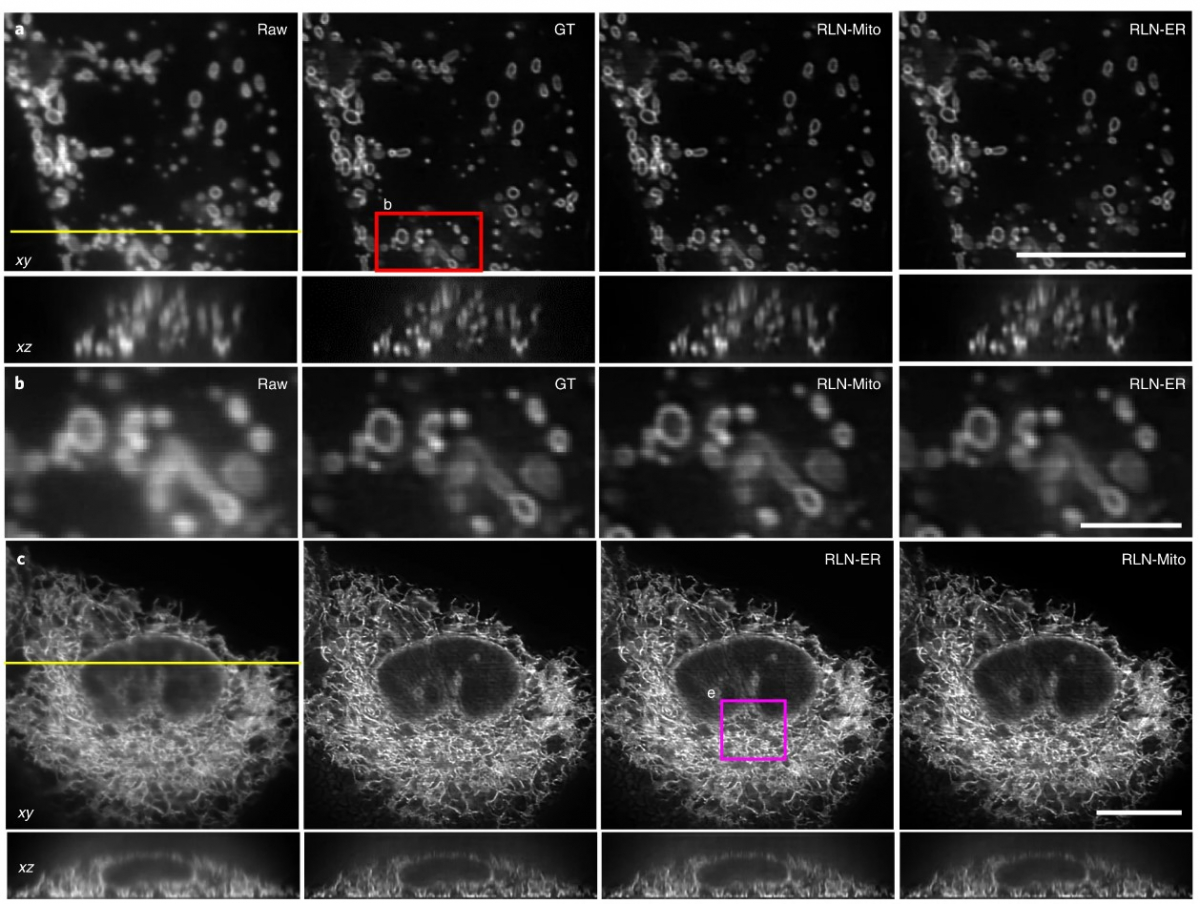

In the new research, led by scientists at NIH and Zhejiang University, the team combined the two approaches, the Richardson-Lucy algorithm and a neural network, to create a better and faster deconvolution method for 3D microscopy.

By incorporating information about the image formation process from the traditional algorithm into the neural network, the researchers were able to create a network that can both learn information about the underlying structures and generalize well to other structures in other images.

The result is a network that is four to 50 times faster and provides better deconvolution, better generalizability and fewer artifacts than other networks. The new method, dubbed the Richardson-Lucy Network, could be applied to other 3D imaging techniques and is a step toward building better and faster imaging techniques for biological research.

Shroff and his team, who moved from NIH to Janelia in August, plan to use the new method in conjunction with a new multiview microscope they are building at the research campus.

Citation:

Yue Li, Yijun Su, Min Guo, Xiaofei Han, Jiamin Liu, Harshad D. Vishwasrao, Xuesong Li, Ryan Christensen, Titas Sengupta, Mark W. Moyle, Ivan Rey-Suarez, Jiji Chen, Arpita Upadhyaya, Ted B. Usdin, Daniel Alfonso Colón-Ramos, Huafeng Liu, Yicong Wu and Hari Shroff. “Incorporating the image formation process into deep learning improves network performance.” Nature Methods, published October 31, 2022. DOI: 10.1038/s41592-022-01652-7

Media Contacts

Nanci Bompey