Imaging cells at nanometer resolution is now faster than ever

Using super-resolution microscopy, scientists can access previously hidden cellular worlds. These scopes smash the resolution limits of conventional light microscopes, revealing nanometer-scale details inside cells. The technology was such a boon for biologists that its developers won the 2014 Nobel Prize in Chemistry.

Now, a new tool for processing super-resolution microscope data makes the technique ten times faster and twice as accurate, a team reports September 3, 2021, in Nature Methods.

That makes it more useful for monitoring activity in living cells, and for studying fragile samples that can break down under extended exposure to harsh light, says Srinivas Turaga, a group leader at HHMI’s Janelia Research Campus. Turaga co-led the project with Jakob Macke at the University of Tübingen and Jonas Ries at the European Molecular Biology Laboratory.

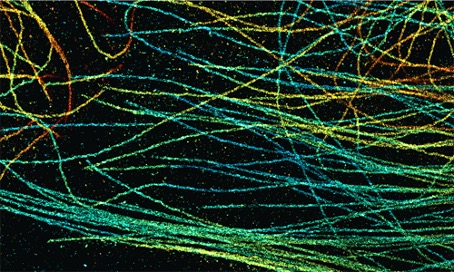

The researchers focused on improving a kind of super-resolution microscopy called single-molecule localization microscopy. In this approach, scientists label structures of interest within cells with bright fluorescent molecules, then use light to activate just a few of those molecules at a time. The microscope images the same sample many times, capturing a different subset of glowing molecules in each frame. A computer program then pinpoints each molecule’s position in every frame and combines all of those positions into the complete image.
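To make the localization step concrete, here is a minimal Python sketch of the classic approach that programs like DECODE aim to improve on: find bright local maxima in a frame, then refine each one to sub-pixel precision with an intensity-weighted centroid. It is not DECODE’s method. The Gaussian spot model, the noise approximation, and all function names and parameters (`render_frame`, `localize`, `sigma`, `photons`, `threshold`) are illustrative assumptions.

```python
import math
import random


def render_frame(emitters, size=32, sigma=1.2, photons=5000.0, background=2.0, seed=0):
    """Simulate one camera frame: Gaussian spots on a flat background, plus noise.

    The center of pixel (row r, column c) sits at coordinates x = c, y = r.
    """
    rng = random.Random(seed)
    peak = photons / (2 * math.pi * sigma ** 2)  # height of a normalized 2D Gaussian
    frame = []
    for r in range(size):
        row = []
        for c in range(size):
            mean = background
            for x, y in emitters:
                mean += peak * math.exp(-((c - x) ** 2 + (r - y) ** 2) / (2 * sigma ** 2))
            # Gaussian approximation to photon shot noise (variance = mean)
            row.append(mean + rng.gauss(0.0, math.sqrt(mean)))
        frame.append(row)
    return frame


def localize(frame, threshold=10.0, background=2.0):
    """Return sub-pixel (x, y) positions of spots that are local maxima above threshold."""
    size = len(frame)
    positions = []
    for r in range(1, size - 1):
        for c in range(1, size - 1):
            window = [(dr, dc, frame[r + dr][c + dc])
                      for dr in (-1, 0, 1) for dc in (-1, 0, 1)]
            value = frame[r][c]
            # Keep only pixels that are bright and the maximum of their 3x3 neighborhood
            if value < threshold or value < max(v for _, _, v in window):
                continue
            # Background-subtracted, intensity-weighted centroid of the 3x3 window
            weights = [(dr, dc, max(v - background, 0.0)) for dr, dc, v in window]
            total = sum(w for _, _, w in weights)
            x = c + sum(dc * w for _, dc, w in weights) / total
            y = r + sum(dr * w for dr, _, w in weights) / total
            positions.append((x, y))
    return positions


# Two well-separated molecules switched on in the same frame
frame = render_frame([(10.3, 15.7), (22.6, 8.2)])
for x, y in localize(frame):
    print(f"molecule near x={x:.2f}, y={y:.2f}")
```

In a real experiment this runs over thousands of frames, each with a different subset of molecules switched on, and the pooled (x, y) positions form the super-resolved image. The 3×3 centroid is deliberately simple and is pulled slightly toward the center pixel, which is one reason practical tools use model fitting or learned approaches instead.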

One of the biggest limitations of super-resolution microscopy is how long it takes to collect data, says Lucas-Raphael Müller, a graduate student in Ries’s lab. Microscope users can’t activate too many fluorescent molecules at once, because their spots blur together and become impossible to tell apart. So it takes many passes to capture the whole sample.
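The trade-off Müller describes can be estimated with a quick simulation. This hypothetical Python sketch scatters a given number of active molecules at random in a 32 × 32-pixel field and reports the fraction that have a neighbor closer than an assumed minimum separation of 2.5 pixels, a stand-in for “close enough to blur together.” The field size, the separation, and the function names are assumptions for illustration; a closed-form approximation for randomly placed points is included for comparison.

```python
import math
import random


def fraction_crowded(n_active, field=32.0, min_sep=2.5, trials=50, seed=1):
    """Monte Carlo estimate: fraction of active molecules with another active
    molecule closer than min_sep pixels (their spots would blur together)."""
    rng = random.Random(seed)
    crowded = 0
    for _ in range(trials):
        pts = [(rng.uniform(0, field), rng.uniform(0, field)) for _ in range(n_active)]
        for i, (xi, yi) in enumerate(pts):
            if any(math.hypot(xi - xj, yi - yj) < min_sep
                   for j, (xj, yj) in enumerate(pts) if j != i):
                crowded += 1
    return crowded / (trials * n_active)


def fraction_crowded_approx(n_active, field=32.0, min_sep=2.5):
    """Closed-form approximation for randomly placed molecules, ignoring edges:
    P(at least one neighbor within min_sep) = 1 - exp(-density * pi * min_sep**2)."""
    density = n_active / field ** 2
    return 1.0 - math.exp(-density * math.pi * min_sep ** 2)


for n in (5, 20, 100):
    print(n, round(fraction_crowded(n), 2), round(fraction_crowded_approx(n), 2))
```

With these assumed numbers, a handful of active molecules per frame rarely crowd each other, while a hundred leave most of them overlapping. Keeping the crowded fraction low caps how many molecules can be active per frame, so the number of frames needed grows roughly as the total number of molecules divided by that cap.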

The DECODE algorithm changes that. DECODE is a neural network: a computer program that learns from training data and improves as it sees more examples. In a head-to-head competition with 12 previously published analysis algorithms, DECODE took the lead in both accuracy and speed, the researchers showed.

Unlike previous programs for super-resolution microscopy, which analyze each frame in isolation, DECODE uses information from neighboring frames to get more context, says Artur Speiser, a PhD student in Macke’s and Turaga’s labs. In a single image, a few lit-up spots close together might look like a single molecule. But neighboring frames might reveal parts of that glow blinking off at different times, suggesting that the spots are actually distinct molecules.
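A toy one-dimensional example shows why neighboring frames help. Two molecules 2 pixels apart, with spots of width σ = 1.5 pixels, merge into a single-looking blob when both are on. If one was on in the previous frame and the other in the next frame, the blob’s center shifts from frame to frame, whereas a single molecule stays put. The `looks_like_multiple` rule below is a hypothetical heuristic for illustration only, with assumed names and thresholds; a neural network like DECODE instead learns how to use this context from training data.

```python
import math


def profile(centers, n=24, sigma=1.5, photons=100.0):
    """Noise-free 1D intensity profile of Gaussian spots at the given centers (pixels)."""
    norm = photons / (math.sqrt(2 * math.pi) * sigma)
    return [sum(norm * math.exp(-(i - c) ** 2 / (2 * sigma ** 2)) for c in centers)
            for i in range(n)]


def centroid(p, min_signal=1.0):
    """Intensity-weighted mean position, or None if the frame is essentially dark."""
    total = sum(p)
    if total < min_signal:
        return None
    return sum(i * v for i, v in enumerate(p)) / total


def looks_like_multiple(prev, cur, nxt, max_shift=0.5):
    """Hypothetical rule: flag the blob as more than one molecule if its
    centroid moves by more than max_shift pixels across the three frames."""
    centers = [c for c in (centroid(prev), centroid(cur), centroid(nxt)) if c is not None]
    if len(centers) < 2:
        return False  # not enough lit frames to compare
    return max(centers) - min(centers) > max_shift


# Molecules at 10 and 12 blink independently; both are on only in the middle frame
print(centroid(profile([10, 12])))  # one blob, centered between the two molecules
print(looks_like_multiple(profile([10]), profile([10, 12]), profile([12])))
# A single molecule at 11 that switches off in the next frame
print(looks_like_multiple(profile([11]), profile([11]), profile([])))
```

Looking at the middle frame alone, the blob would be reported as one molecule at pixel 11; the neighboring frames are what expose the two separate sources.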

Using DECODE, scientists can activate more fluorescent molecules in each camera frame, so fewer passes are needed to get a complete picture. That makes the process much faster, Turaga says. “We showed that if you train your neural network to actually look at three frames at a time, and integrate information across these frames, you can do a much better job detecting and localizing molecules.”
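Feeding a network “three frames at a time” amounts to pairing every frame with its predecessor and successor. A minimal Python sketch of that bookkeeping follows. The function name `three_frame_windows` is hypothetical, and padding the first and last frames by repeating the boundary frame is an assumption, not a documented DECODE detail.

```python
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def three_frame_windows(frames: Sequence[T]) -> List[Tuple[T, T, T]]:
    """Pair every frame with its previous and next frame.

    The first and last frames have only one neighbor, so the boundary frame is
    repeated in its place (an assumed padding choice for this sketch).
    """
    n = len(frames)
    return [(frames[max(t - 1, 0)], frames[t], frames[min(t + 1, n - 1)])
            for t in range(n)]


# Frames stand in as labels here; in practice each would be a 2D image
for window in three_frame_windows(["f0", "f1", "f2", "f3"]):
    print(window)
```

In this sketch’s framing, each (previous, current, next) triple would be stacked, for example as three input channels, and the network would be asked to find molecules in the middle frame.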

The researchers also improved the way the algorithm estimates uncertainty in its analysis. That makes the program more accurate, because during training the network gets more detailed feedback about where it identifies molecules well and where it needs improvement. “The better you can do at giving the neural network feedback, the better you can train it,” Turaga says.
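One common way to give a network feedback on its uncertainty, assumed here purely for illustration, is to have it predict both a position and an error bar (σ) and to train it with a Gaussian negative log-likelihood. This loss penalizes confident-but-wrong guesses heavily and vague guesses more mildly, so the best score comes from an accurate position paired with an honest σ. It is a generic sketch, not the exact loss used in the paper, and `gaussian_nll` is a hypothetical name.

```python
import math


def gaussian_nll(target, pred_mean, pred_sigma):
    """Negative log-likelihood of `target` under N(pred_mean, pred_sigma**2).

    Lowest when the predicted position is close AND pred_sigma matches the
    size of the actual error; confident-but-wrong guesses are penalized heavily.
    """
    if pred_sigma <= 0:
        raise ValueError("pred_sigma must be positive")
    err = target - pred_mean
    return math.log(pred_sigma) + err ** 2 / (2 * pred_sigma ** 2) + 0.5 * math.log(2 * math.pi)


# True molecule at x = 5.0 px; the network's estimate is 4.0 px (a 1-px error)
for sigma in (0.1, 1.0, 10.0):  # overconfident, honest, vague
    print(sigma, round(gaussian_nll(5.0, 4.0, sigma), 3))
```

For a fixed 1-pixel error, the honest σ = 1.0 gives the lowest loss of the three, so minimizing this loss pushes the network toward uncertainty estimates that match its real mistakes.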

Turaga and Macke’s teams both specialize in machine learning, and collaborating with the microscopy experts in Ries’s lab helped transform the tool into something usable by biologists, Turaga says. DECODE is now available for free online.


Citation:

Artur Speiser, Lucas-Raphael Müller, Ulf Matti, Christopher J. Obara, Wesley R. Legant, Anna Kreshuk, Jakob H. Macke, Jonas Ries, and Srinivas C. Turaga. “Deep learning enables fast and dense single-molecule localization with high accuracy,” Nature Methods, published online September 3, 2021. doi:10.1038/s41592-021-01236-x.